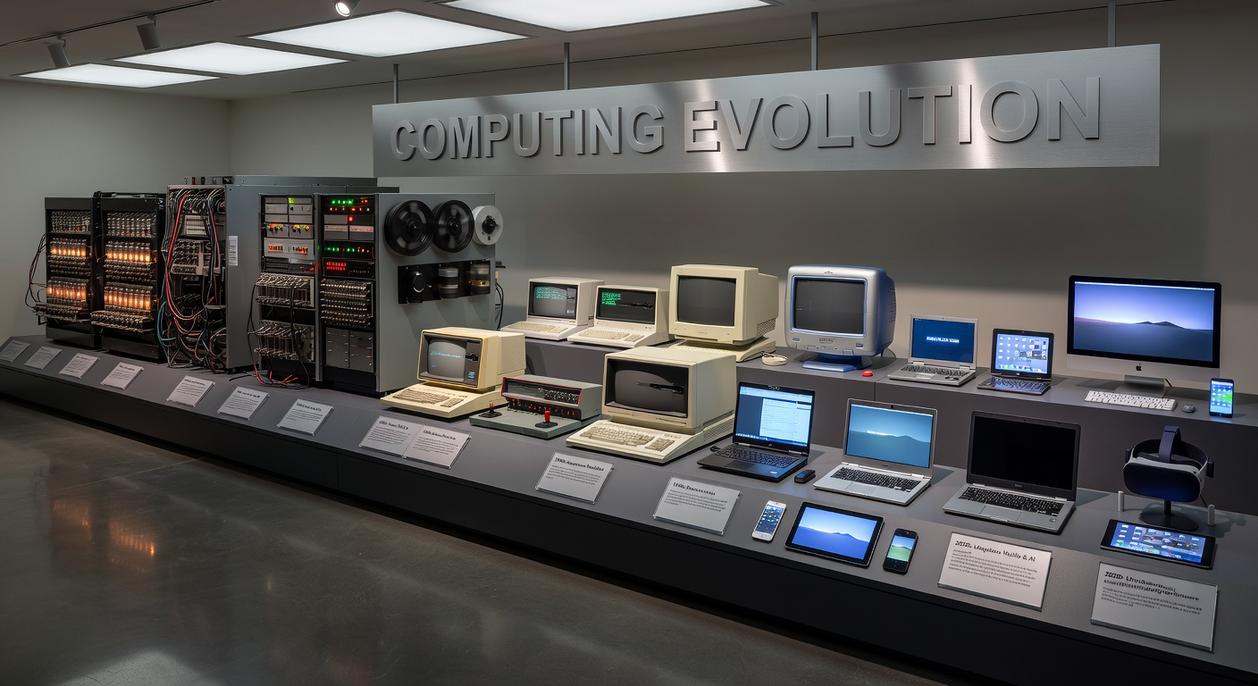

Computers once filled entire rooms, humming with limited power and vast mechanical complexity. Today, devices in our pockets outperform those early machines by many orders of magnitude. This article traces the evolution of computer architecture, showing how breakthroughs in hardware design, processing models, and system integration transformed computing from centralized giants into sleek, distributed powerhouses. By examining the engineering trade-offs and architectural shifts that defined each era, we clarify how innovation steadily increased performance, efficiency, and accessibility. Grounded in core computer science principles, this guide provides the historical foundation you need to understand both modern systems and the future of computing.

The Dawn of Computing: Vacuum Tubes and the Von Neumann Architecture


First-generation computers like ENIAC ran on thousands of vacuum tubes—glass components that controlled electrical flow to perform calculations. Think of them as oversized, fragile light bulbs that powered logic instead of living rooms. When one failed (and they often did), the whole system could stall.

Vacuum Tubes vs. Stored Programs: Early Computing Designs

- Vacuum Tube Machines: Massive, power-hungry, room-sized systems requiring constant maintenance. Reliable? Not quite.

- Von Neumann Architecture: A stored-program design where data and instructions share the same memory, simplifying reprogramming and forming the backbone of modern systems.

Critics argue vacuum tube systems were inefficient dead ends. True, they consumed enormous electricity: ENIAC used about 150 kilowatts (U.S. Army archives). But they proved that large-scale electronic computation was practical, and the logic circuits refined in them laid the groundwork for the machines that followed.

The Von Neumann model solved a core bottleneck: rewiring hardware for every task. Instead, instructions lived in memory alongside the data they operated on, an idea central to the evolution of computer architecture.
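To make the stored-program idea concrete, here is a minimal sketch of a toy machine in Python. The opcodes (`LOAD`, `ADD`, `STORE`, `HALT`) and the memory layout are invented for this illustration and do not correspond to any historical instruction set; the point is only that instructions and data sit in the same memory.

```python
# Toy stored-program machine: instructions and data live in one memory list.
# The opcodes below are hypothetical, chosen only for this illustration.
LOAD, ADD, STORE, HALT = 1, 2, 3, 0

def run(memory):
    """Execute the program stored at address 0 and return the final memory."""
    acc = 0  # accumulator register
    pc = 0   # program counter
    while True:
        opcode, operand = memory[pc], memory[pc + 1]  # fetch two cells
        pc += 2
        if opcode == LOAD:
            acc = memory[operand]
        elif opcode == ADD:
            acc += memory[operand]
        elif opcode == STORE:
            memory[operand] = acc
        elif opcode == HALT:
            return memory
        else:
            raise ValueError(f"unknown opcode {opcode} at address {pc - 2}")

# Addresses 0-7 hold instructions; addresses 8-10 hold data.
program = [
    LOAD, 8,    # acc = memory[8]
    ADD, 9,     # acc += memory[9]
    STORE, 10,  # memory[10] = acc
    HALT, 0,
    2, 3, 0,    # data: two inputs and a result slot
]

result = run(list(program))
print(result[10])  # 5
```

Giving the machine a different task means writing different numbers into `memory`, not rewiring anything, which is the practical benefit the stored-program design delivered.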

Yes, these machines were limited to government labs. But reliability had to come before scalability (crawl, then compute).

The Transistor Revolution: Mainframes and the Rise of Integrated Circuits

In the 1950s, the hum of vacuum tubes filled entire rooms, radiating heat you could almost taste in the air. Then came the transistor, a tiny switch that replaced bulky glass tubes and transformed computing from fragile spectacle into dependable machinery. A transistor is a semiconductor device that amplifies or switches electronic signals, and its arrival marked a decisive leap in the evolution of computer architecture.
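As a rough sketch of why a simple switch matters so much, the Python below treats a NAND gate as the only primitive and builds the other gates, plus a one-bit adder, on top of it. This is a logical model only: it assumes ideal on/off behavior and ignores the electrical details of real transistor circuits, and the function names are illustrative.

```python
def nand(a: bool, b: bool) -> bool:
    """Output is off only when both inputs are on."""
    return not (a and b)

# Every other gate below is composed purely from nand().
def not_(a: bool) -> bool:
    return nand(a, a)

def and_(a: bool, b: bool) -> bool:
    return not_(nand(a, b))

def or_(a: bool, b: bool) -> bool:
    return nand(not_(a), not_(b))

def xor(a: bool, b: bool) -> bool:
    return and_(or_(a, b), nand(a, b))

def half_adder(a: bool, b: bool) -> tuple[bool, bool]:
    """Add two bits; return (sum, carry)."""
    return xor(a, b), and_(a, b)

# Print the truth table of the half adder.
for a in (False, True):
    for b in (False, True):
        total, carry = half_adder(a, b)
        print(int(a), int(b), "->", int(total), int(carry))
```

Chaining adders like this one is how arithmetic on wider numbers is assembled from nothing but switches.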

Mainframes like the IBM System/360 embodied this shift. Imagine rows of steel cabinets, blinking lights winking like a sci‑fi set from Star Trek, and the steady clatter of punched cards sliding through readers. Their structure revolved around:

- Centralized processing power housed in climate‑controlled rooms

- Batch processing, where jobs queued patiently for execution

- Time‑sharing systems that let multiple users tap into one machine
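The difference between batch processing and time-sharing can be sketched with two small schedulers. This is an illustrative model, not a description of how any particular mainframe worked: the round-robin policy, the `quantum` parameter, and the job names and durations are all assumptions made for the example.

```python
from collections import deque

def batch(jobs):
    """Run each job to completion in arrival order; return finish times."""
    clock, finished = 0, {}
    for name, duration in jobs:
        clock += duration
        finished[name] = clock
    return finished

def time_share(jobs, quantum=1):
    """Round-robin: each job gets one quantum per turn; return finish times."""
    queue = deque(jobs)
    clock, finished = 0, {}
    while queue:
        name, remaining = queue.popleft()
        used = min(quantum, remaining)
        clock += used
        if remaining > used:
            queue.append((name, remaining - used))  # back of the line
        else:
            finished[name] = clock
    return finished

# Hypothetical workload: one long job followed by two short ones.
jobs = [("payroll", 5), ("report", 1), ("query", 1)]
print(batch(jobs))       # {'payroll': 5, 'report': 6, 'query': 7}
print(time_share(jobs))  # {'report': 2, 'query': 3, 'payroll': 7}
```

Total work is identical in both cases, but under time-sharing the short jobs no longer wait behind the long one, which is what made a single machine feel responsive to several users at once.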

Critics argue mainframes were rigid and elitist, accessible only to governments and corporations. That’s fair. Yet their reliability and throughput built the first true commercial ecosystems.

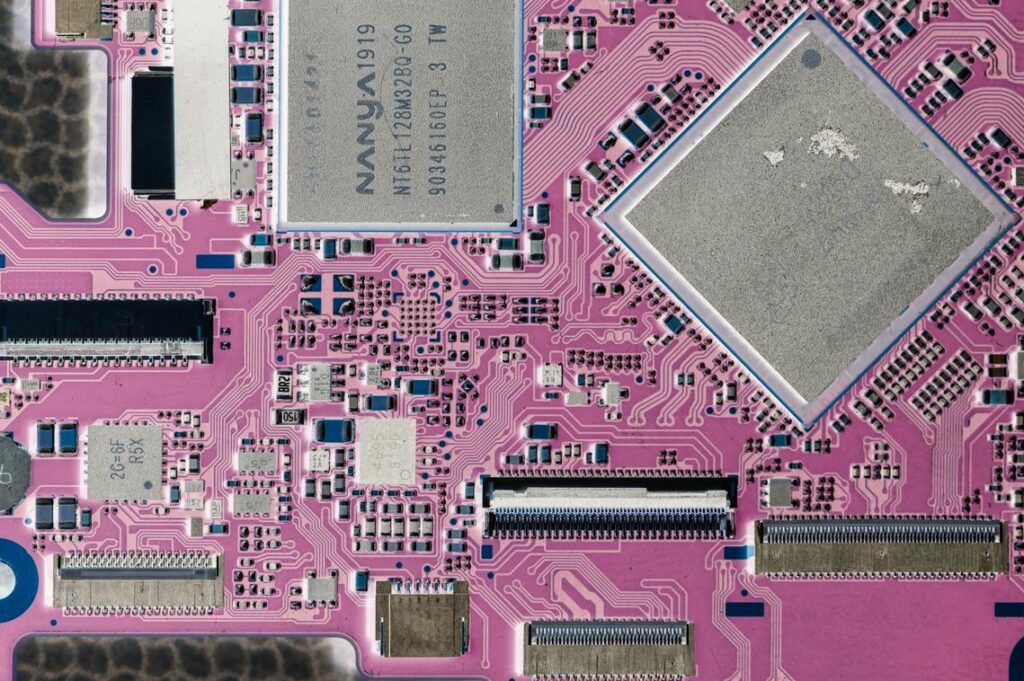

The introduction of integrated circuits—multiple transistors etched onto a single silicon chip—tightened the circuitry, reduced heat, and boosted speed. You could feel the difference: quieter operation, cooler hardware. Functionality was no longer enough. Efficiency became king.

The Microprocessor: How a Chip on a Desk Changed Everything

At its core, a microprocessor is a central processing unit (CPU) placed onto a single integrated circuit. Before 1971, CPUs filled circuit boards; then Intel released the 4004, shrinking the entire brain of a computer onto one chip. That shift didn’t just make machines smaller. It made them personal.

Initially, computing followed a centralized model. Massive mainframes sat in secured rooms, and users accessed them through terminals. Critics argue that mainframes were more efficient and easier to control (and in some enterprise cases, they still are). However, decentralization changed who had power. With microprocessors inside personal computers (PCs), individuals gained dedicated computing resources on their desks—no scheduling time slots required.

As a result, architectural change accelerated. The motherboard, essentially the city map connecting components, allowed CPUs, memory, and storage to communicate. Expansion slots such as ISA and later PCI standardized how hardware connected. This standardization mattered. It enabled a booming ecosystem of third-party graphics cards, sound cards, and networking devices. (Think LEGO blocks, but for engineers.) Pro tip: When upgrading older systems, always confirm slot compatibility before buying expansion cards.

Meanwhile, user interfaces evolved dramatically. Early command-line systems required memorizing text commands. Then graphical user interfaces (GUIs) introduced windows, icons, and a mouse—famously popularized by Apple and later Microsoft. This design shift made computing accessible to non-technical users for the first time.

To fully grasp why microprocessors matter, it helps to revisit “Understanding Binary Code: The Foundation of Modern Computing”: https://gdtj45.com/understanding-binary-code-the-foundation-of-modern-computing/.

In short, one chip didn’t just shrink computers—it redistributed technological power worldwide.

Connectivity and Convergence: The Internet, Mobile, and System-on-a-Chip (SoC)

The rise of the internet didn’t just change how we communicate; it reshaped computer architecture itself. As connectivity became essential, machines were redesigned around networking standards such as Ethernet and Wi-Fi. In simple terms, a protocol is a shared rulebook that allows devices to “speak” to each other. Without it, your laptop and router would be like two actors in different movies.
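To show what a "shared rulebook" looks like in practice, here is a minimal sketch of a made-up message format in Python. It is not Ethernet, Wi-Fi, or any real protocol: the rule (a 2-byte big-endian length followed by UTF-8 text) and the `encode`/`decode` names are assumptions for illustration. Both sides can exchange messages only because they agree on that rule.

```python
import struct

# Hypothetical toy protocol: a 2-byte big-endian length, then UTF-8 text.
HEADER = struct.Struct(">H")

def encode(message: str) -> bytes:
    """Prefix the UTF-8 payload with its length so the receiver can frame it."""
    payload = message.encode("utf-8")
    if len(payload) > 0xFFFF:
        raise ValueError("message too long for a 2-byte length field")
    return HEADER.pack(len(payload)) + payload

def decode(stream: bytes) -> list[str]:
    """Split a byte stream back into messages using the length prefix."""
    messages, offset = [], 0
    while offset < len(stream):
        (length,) = HEADER.unpack_from(stream, offset)
        offset += HEADER.size
        chunk = stream[offset:offset + length]
        if len(chunk) < length:
            raise ValueError("truncated message")
        messages.append(chunk.decode("utf-8"))
        offset += length
    return messages

wire = encode("hello") + encode("router")
print(decode(wire))  # ['hello', 'router']
```

A receiver that assumed a different header size or byte order would misread the same bytes, which is the "two actors in different movies" problem in miniature.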

What’s in it for you? Faster communication, seamless cloud access, and real-time collaboration (yes, even your endless group chats benefit from this).

Then came mobile computing. Engineers faced a tough balancing act: deliver desktop-level performance inside a pocket-sized device without overheating or draining the battery. Power efficiency—measured as performance-per-watt—became the gold standard. The better this ratio, the longer your device runs without turning into a hand warmer.
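The performance-per-watt ratio is simple arithmetic, sketched below in Python. The throughput and power figures are hypothetical, chosen only to show the calculation, and do not describe any real chip.

```python
def perf_per_watt(operations_per_second: float, watts: float) -> float:
    """Return throughput delivered per watt of power drawn."""
    if watts <= 0:
        raise ValueError("watts must be positive")
    return operations_per_second / watts

# Hypothetical figures, for illustration only.
desktop = perf_per_watt(operations_per_second=400e9, watts=100)
phone = perf_per_watt(operations_per_second=100e9, watts=5)

print(phone / desktop)  # 5.0
```

In this made-up comparison the phone chip does a quarter of the work per second but is five times more efficient per watt, which is the trade-off mobile designs optimize for.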

At the center of this shift is the System-on-a-Chip (SoC): a single integrated circuit that combines the CPU (central processing unit), GPU (graphics processing unit), memory, and connectivity modules onto one chip.

The benefit is simple: more power, less space, lower energy use.

Key advantages include:

- Longer battery life

- Faster app performance

- Always-on connectivity

- Slimmer, lighter devices

This convergence enables powerful, connected experiences—streaming, gaming, AI assistants—anytime, anywhere.

The Next Architectural Leap: AI, Quantum, and Specialized Hardware

This journey through the evolution of computer architecture has shown how each breakthrough tackled the same core problem: pushing past physical limits to gain more power, speed, and efficiency. From vacuum tubes to SoCs, innovation has always been the answer to computational bottlenecks. Now, as silicon scaling approaches its physical limits, AI accelerators and quantum systems represent the next decisive leap.

If you’re trying to stay ahead in a rapidly shifting tech landscape, understanding these shifts is no longer optional. Don’t get left behind—explore emerging hardware trends now and position yourself at the forefront of the next computing revolution.

Director of Machine Learning & AI Strategy

Jennifer Shayadien has opinions about core computing concepts. Informed ones, backed by real experience, but opinions nonetheless, and they don't try to disguise them as neutral observation. They think a lot of what gets written about Core Computing Concepts, Device Optimization Techniques, Data Encryption and Network Protocols is either too cautious to be useful or too confident to be credible, and their work tends to sit deliberately in the space between those two failure modes.

Reading Jennifer's pieces, you get the sense of someone who has thought about this stuff seriously and arrived at actual conclusions, not just collected a range of perspectives and declined to pick one. That can be uncomfortable when they land on something you disagree with. It's also why the writing is worth engaging with. Jennifer isn't interested in telling people what they want to hear. They are interested in telling them what they actually think, with enough reasoning behind it that you can push back if you want to. That kind of intellectual honesty is rarer than it should be.

What Jennifer is best at is the moment when a familiar topic reveals something unexpected: when the conventional wisdom turns out to be slightly off, or when a small shift in framing changes everything. They find those moments consistently, which is why their work tends to generate real discussion rather than just passive agreement.
